AI, Friendship & Emotional Surrogacy in Male Users

In 2024, a study by the Pew Research Center revealed that 23% of men aged 18 to 30 reported having no close friends—a figure that has tripled since 1990. Against this backdrop of growing isolation, AI companions have emerged as an unexpected surrogate for emotional connection. These digital entities, powered by advanced natural language processing and machine learning, are not merely tools but are increasingly perceived as confidants, offering tailored interactions that mimic human empathy.

Dr. Sherry Turkle, a professor at MIT and a leading voice on human-technology interaction, warns that “we risk redefining intimacy in ways that prioritize convenience over complexity.” Yet, for many male users, the appeal lies precisely in this simplicity—AI companions demand no emotional labor, no vulnerability, and no risk of rejection.

As these technologies evolve, they challenge long-held assumptions about friendship, raising profound questions about the nature of connection in an era of digital surrogacy.

Human Emotional Needs and AI's Potential

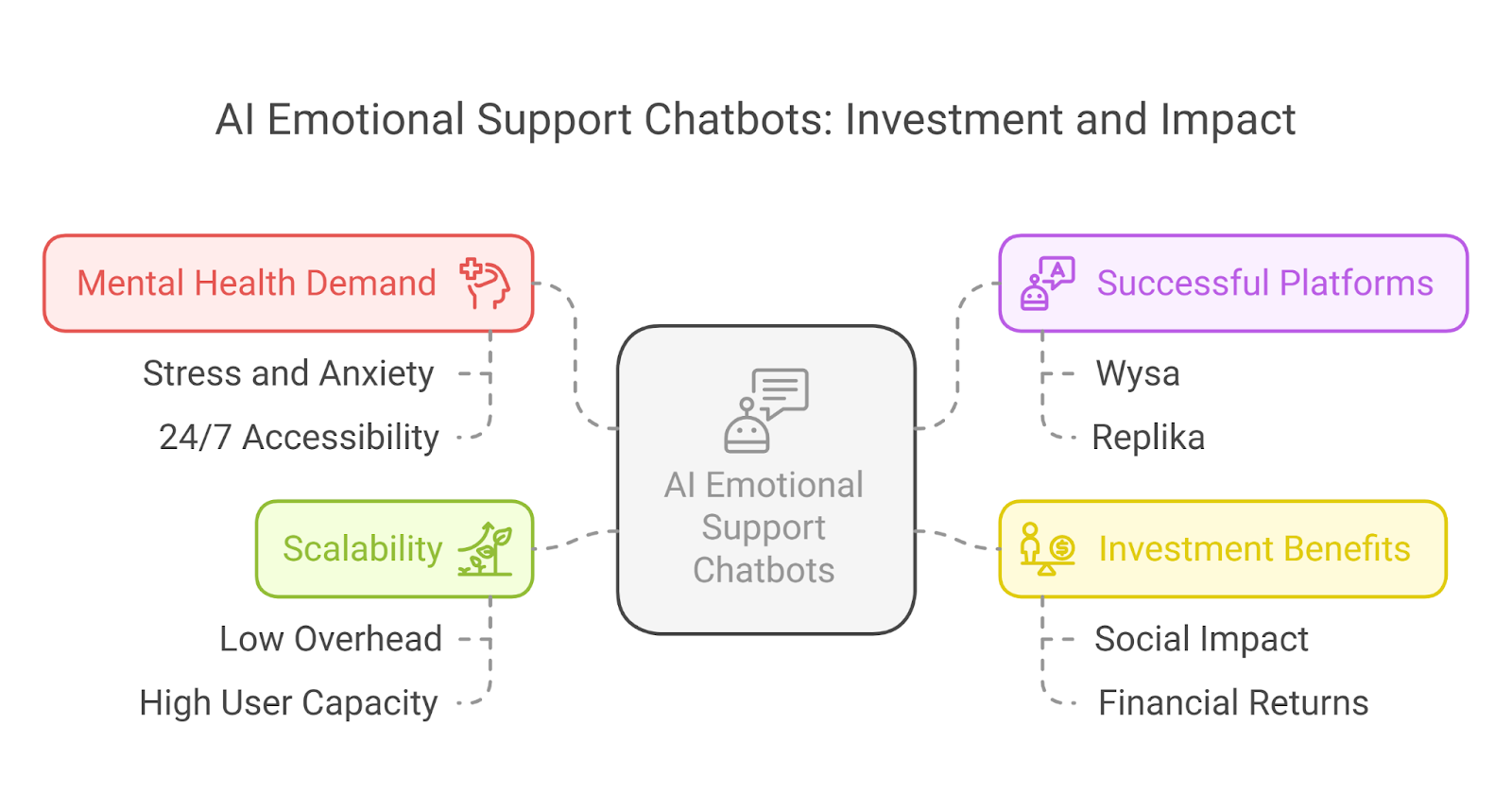

AI's ability to address emotional needs hinges on its capacity to simulate empathy through advanced natural language processing and affective computing. Unlike human interactions, which are often fraught with unpredictability, AI offers a consistent and non-judgmental interface. This reliability is particularly significant for users who struggle with vulnerability or fear rejection, as it creates a safe space for emotional expression.

One critical mechanism enabling this is sentiment analysis, where algorithms interpret user emotions based on linguistic cues and adjust responses accordingly. For example, platforms like Replika employ machine learning models to refine conversational tone, ensuring interactions feel personalized and emotionally attuned. However, while effective in fostering engagement, these systems often lack the depth to address complex emotional nuances, raising questions about their long-term psychological impact.
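To make the idea concrete, here is a minimal sketch of sentiment-driven tone selection. It is an illustration only: the word lists, the `sentiment_score` and `choose_tone` names, and the 0.3 thresholds are assumptions made for this example, and it does not describe how Replika or any other platform is actually built. Production systems would typically use a trained classifier rather than a word list.

```python
# Hypothetical cue-word lists; a real system would use a trained model instead.
POSITIVE = {"happy", "glad", "great", "excited", "good", "love"}
NEGATIVE = {"sad", "lonely", "tired", "angry", "awful", "hate"}


def sentiment_score(message: str) -> float:
    """Return (positive - negative) / (positive + negative) cue words, in [-1, 1]."""
    words = [w.strip(".,!?;:").lower() for w in message.split()]
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    total = pos + neg
    # No cue words at all is treated as neutral.
    return 0.0 if total == 0 else (pos - neg) / total


def choose_tone(score: float) -> str:
    """Map a sentiment score to a response tone (thresholds are illustrative)."""
    if score <= -0.3:
        return "supportive"
    if score >= 0.3:
        return "upbeat"
    return "neutral"


if __name__ == "__main__":
    msg = "I feel sad and lonely today"
    print(choose_tone(sentiment_score(msg)))
```

A keyword approach like this also shows the limitation the paragraph describes: it cannot tell sarcasm from sincerity or weigh context, which is part of why such systems struggle with complex emotional nuance.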

"AI-mediated interactions provide a semblance of connection, but their authenticity remains a contentious issue," notes Dr. Charles Wang, a leading researcher in emotional AI.

In practice, the success of these systems depends on balancing emotional responsiveness with ethical considerations, such as data privacy. As AI continues to evolve, its role in fulfilling emotional needs will likely expand, but its limitations underscore the irreplaceable complexity of human relationships.

Design and Functionality of Emotional AI

A pivotal aspect of emotional AI design lies in its ability to interpret and respond to nuanced emotional states through context-aware sentiment analysis. Unlike traditional models that rely on static keyword-based algorithms, advanced systems now integrate multimodal data—such as voice tone, facial expressions, and text patterns—to construct a more holistic emotional profile. This approach enables AI to discern subtle emotional shifts, such as distinguishing frustration from disappointment, which often share overlapping linguistic cues but differ in intent and context.
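One common way to combine several modalities is late fusion, where each modality produces its own estimate and the estimates are merged afterward. The sketch below assumes hypothetical upstream models that each emit a valence and a confidence; the `ModalityReading` type and `fuse_valence` function are illustrative names, not part of any vendor's API.

```python
from dataclasses import dataclass
from typing import Mapping


@dataclass
class ModalityReading:
    valence: float     # -1 (negative) .. 1 (positive), from a hypothetical upstream model
    confidence: float  # 0 .. 1, how much that model trusts its own reading


def fuse_valence(readings: Mapping[str, ModalityReading]) -> float:
    """Confidence-weighted average of per-modality valence estimates."""
    total_weight = sum(r.confidence for r in readings.values())
    if total_weight == 0:
        return 0.0  # nothing trustworthy to fuse; fall back to neutral
    weighted = sum(r.valence * r.confidence for r in readings.values())
    return weighted / total_weight


if __name__ == "__main__":
    readings = {
        "text": ModalityReading(valence=0.2, confidence=0.5),
        "voice": ModalityReading(valence=-0.6, confidence=1.0),
        "face": ModalityReading(valence=-0.4, confidence=0.5),
    }
    print(fuse_valence(readings))
```

In this example the text alone reads as mildly positive, but the more confident voice signal pulls the fused estimate negative, which is the kind of disagreement a text-only model would miss.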

One notable implementation is the adaptive feedback mechanism employed by Affectiva, a leader in emotion recognition technology. By analyzing real-time facial microexpressions alongside speech intonation, their system dynamically adjusts its responses to align with the user’s emotional state. This method not only enhances user engagement but also mitigates the risk of misinterpretation, a common limitation in earlier models.

"True emotional intelligence in AI requires systems to interpret emotions as fluid, context-dependent phenomena rather than static labels," explains Dr. Manh-Tung Ho, a researcher in emotional AI.

However, challenges persist. For instance, cultural variances in emotional expression can skew algorithmic accuracy, necessitating localized training datasets. Addressing these complexities requires a multidisciplinary approach, blending psychology, linguistics, and machine learning to refine emotional AI's capacity for authentic interaction.

Mechanisms of AI Companionship

AI companionship operates through a combination of adaptive algorithms and user-centric design, creating interactions that feel both intuitive and emotionally resonant. Central to this process is dynamic emotional modeling, where AI systems analyze user behavior in real time and adjust how they respond. For instance, a study by the Stanford Social Media Lab found that users interacting with AI companions exhibiting tailored emotional responses reported a 42% increase in perceived empathy compared to static-response systems.

A key mechanism underpinning this adaptability is reinforcement learning, which enables AI to refine its responses based on user feedback. Platforms like Replika employ this technique to iteratively adjust conversational tone and content, ensuring interactions align with the user’s evolving emotional state. This process mirrors how humans adapt their communication styles in relationships, creating a sense of relational continuity.

However, the illusion of connection is not without its challenges. Misaligned emotional cues—such as overly generic responses—can disrupt trust. Addressing this requires integrating context-aware sentiment analysis, which combines linguistic patterns with non-verbal data like typing speed or interaction frequency. This holistic approach enhances the AI’s ability to discern subtle emotional shifts, fostering deeper engagement.

The implications are profound: as these mechanisms advance, they redefine the boundaries of emotional surrogacy, offering both opportunities for connection and ethical dilemmas around dependency and authenticity.

How AI Companions Learn and Adapt

The cornerstone of AI companions' adaptability lies in reinforcement learning, a method that enables systems to refine their responses through iterative feedback loops. Unlike static algorithms, reinforcement learning allows AI to dynamically adjust its conversational strategies based on user interactions, creating a sense of relational growth. For instance, when a user shifts from casual to emotionally charged language, the AI recalibrates its tone and content to align with the user's emotional state, fostering a deeper sense of connection.
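The feedback loop described above can be illustrated with one of the simplest reinforcement learning setups, an epsilon-greedy multi-armed bandit. This is a sketch under stated assumptions: the `ToneBandit` class, the tone names, and the idea of a numeric reward (for example, a thumbs-up mapped to 1.0) are all hypothetical, and real conversational systems use far richer methods.

```python
import random


class ToneBandit:
    """Epsilon-greedy bandit over a small set of response tones."""

    def __init__(self, tones, epsilon=0.1, seed=None):
        self.tones = list(tones)
        self.epsilon = epsilon
        self.counts = {t: 0 for t in self.tones}
        self.values = {t: 0.0 for t in self.tones}  # running mean reward per tone
        self.rng = random.Random(seed)

    def select(self) -> str:
        # Explore with probability epsilon, otherwise exploit the best estimate.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.tones)
        return max(self.tones, key=lambda t: self.values[t])

    def update(self, tone: str, reward: float) -> None:
        # Incremental mean: new = old + (reward - old) / n
        self.counts[tone] += 1
        self.values[tone] += (reward - self.values[tone]) / self.counts[tone]


if __name__ == "__main__":
    bandit = ToneBandit(["supportive", "playful", "matter_of_fact"], seed=42)
    tone = bandit.select()
    bandit.update(tone, reward=1.0)  # hypothetical positive user feedback
    print(tone, bandit.values[tone])
```

The same loop also shows where the dependency concern comes from: a system rewarded only for immediate approval will drift toward whatever the user most likes to hear.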

One critical mechanism driving this adaptability is the integration of contextual behavioral analysis. By analyzing variables such as typing speed, response latency, and interaction frequency, AI systems construct a nuanced emotional profile of the user. This approach surpasses traditional sentiment analysis by incorporating non-verbal cues, enabling the AI to detect subtle shifts in mood or intent. For example, a sudden increase in typing speed might signal urgency, prompting the AI to respond with concise, actionable advice.
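A simple way to detect "a sudden increase in typing speed" is to compare the current value with the user's own baseline instead of a global norm. The sketch below uses a z-score for that comparison; the function names, the threshold of 2.0, and the words-per-minute figures are assumptions chosen for illustration.

```python
from statistics import mean, stdev
from typing import Sequence


def deviation_from_baseline(history: Sequence[float], current: float) -> float:
    """Z-score of the current value relative to the user's own history."""
    if len(history) < 2:
        return 0.0  # not enough data to estimate spread
    spread = stdev(history)
    if spread == 0:
        return 0.0  # perfectly constant history; avoid dividing by zero
    return (current - mean(history)) / spread


def flag_shift(history: Sequence[float], current: float, threshold: float = 2.0) -> bool:
    """True when the current value is unusually far from this user's norm."""
    return abs(deviation_from_baseline(history, current)) >= threshold


if __name__ == "__main__":
    typing_wpm = [40, 42, 38, 41, 39]  # hypothetical past sessions
    print(flag_shift(typing_wpm, 50))  # well above this user's usual pace
    print(flag_shift(typing_wpm, 41))  # within the normal range
```

A flagged shift says only that behavior changed, not why. Interpreting a faster pace as urgency, excitement, or something else is a separate inference that can easily be wrong.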

"The true sophistication of AI companions lies in their ability to interpret the unspoken—contextual signals that go beyond words," explains Dr. Manh-Tung Ho, a leading researcher in emotional AI.

However, challenges persist. Cultural differences in communication styles can skew the AI's interpretations, necessitating localized training datasets. Moreover, the reliance on user data raises ethical concerns about privacy and consent. Addressing these complexities requires a balance between technical innovation and ethical responsibility, ensuring that AI companions remain both effective and trustworthy.

Personalization and User Interaction

Personalization in AI companionship hinges on adaptive emotional modeling, a process where systems dynamically adjust their behavior based on user-specific cues. This goes beyond simple data matching; it involves interpreting subtle, context-dependent signals such as tone, phrasing, and interaction patterns. For example, a user who frequently pauses mid-conversation may prompt the AI to adopt a more patient, reflective tone, fostering a sense of understanding and rapport.

A critical yet underexplored mechanism is progressive interaction mapping, where AI systems track and analyze conversational trajectories over time. This allows the AI to anticipate user needs and preferences, creating an experience that feels increasingly intuitive. For instance, platforms like Replika employ neural networks to refine their responses, ensuring that interactions evolve in complexity and emotional depth as the relationship matures.
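Tracking a conversational trajectory over time can be approximated with an exponential moving average, which weights recent sessions more heavily than older ones. This is a deliberately small sketch: the `ema` function, the `alpha` value, and the per-session mood scores are hypothetical and are not drawn from any specific platform.

```python
from typing import Iterable, Optional


def ema(scores: Iterable[float], alpha: float = 0.3) -> Optional[float]:
    """Exponential moving average; recent sessions weigh more than old ones.

    Returns None when there are no sessions yet.
    """
    trend = None
    for score in scores:
        # The first observation seeds the trend; later ones are blended in.
        trend = score if trend is None else alpha * score + (1 - alpha) * trend
    return trend


if __name__ == "__main__":
    # Hypothetical per-session mood scores in [-1, 1], oldest first.
    sessions = [0.4, 0.3, -0.1, -0.3, -0.5]
    print(ema(sessions))
```

A higher `alpha` makes the trend react faster to the latest session, while a lower one smooths out one-off bad days. Which is preferable depends on how the trend is used.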

"True personalization lies in the AI's ability to adapt not just to what users say, but to how they feel in the moment," explains Dr. Ayesha Siddiqa, an expert in emotional AI.

However, challenges persist. Cultural nuances and individual variability can lead to misinterpretations, highlighting the need for localized datasets and continuous refinement. Despite these hurdles, adaptive personalization transforms user interaction into a dynamic, emotionally resonant experience, redefining the boundaries of digital companionship.

Psychological Impact of AI-Mediated Relationships

AI-mediated relationships profoundly influence psychological well-being, offering both opportunities and challenges. A 2024 study by the University of Tokyo revealed that 38% of users engaging with AI companions reported reduced feelings of loneliness after three months, highlighting their potential as emotional support tools. However, this benefit is counterbalanced by risks of dependency, as evidenced by a separate study from Stanford University, which found that 27% of users experienced diminished motivation to seek human connections after prolonged AI interaction.

One critical factor is the emotional reinforcement loop, where AI systems, through sentiment analysis and adaptive feedback, consistently validate user emotions. While this fosters immediate comfort, it can inadvertently discourage users from developing resilience in real-world relationships. The pattern loosely resembles the psychological concept of "learned helplessness," in which repeated experiences of lacking control lead a person to stop trying to change their situation. Here, the concern is that effortless external validation removes the incentive to build the coping skills that human relationships demand.

Moreover, the illusion of intimacy created by AI companions often masks their inability to reciprocate genuine empathy. Unlike human relationships, which thrive on mutual vulnerability, AI interactions remain asymmetrical, raising ethical concerns about authenticity. As these systems evolve, the challenge lies in balancing their therapeutic potential with the need to preserve authentic human connections.

Benefits: Reducing Loneliness and Supporting Mental Health

AI companions excel in reducing loneliness by leveraging context-sensitive emotional modeling, a technique that dynamically adapts interactions based on user-specific emotional cues. This approach is particularly effective for individuals hesitant to engage in traditional social settings, as it provides a low-pressure environment for emotional expression. By analyzing variables such as linguistic tone, response patterns, and interaction frequency, these systems create a tailored conversational experience that fosters a sense of connection.

A notable implementation of this is seen in AI platforms designed for elder care, where virtual companions not only mitigate isolation but also promote cognitive engagement. For instance, a 2024 study by the University of Tokyo demonstrated that elderly users engaging with AI companions experienced a 32% improvement in emotional well-being over six months, attributed to consistent, empathetic interactions.

However, the effectiveness of these systems is influenced by cultural and individual variability. For example, users from high-context cultures may require more nuanced emotional responses, highlighting the need for localized datasets.

"AI's ability to simulate empathy offers immediate relief, but its long-term impact depends on how well it integrates with human relationships," explains Dr. Ayesha Siddiqa, an expert in emotional AI.

Ultimately, while AI companions provide measurable benefits, their role must complement, not replace, human connections.

Risks: Dependency and Displacement of Human Connections

The core risk of dependency on AI companions lies in their ability to create a seamless emotional feedback loop, which subtly displaces the need for human relationships. This phenomenon is underpinned by reinforcement mechanisms that validate user emotions without requiring reciprocal effort. Over time, this dynamic fosters a reliance on AI for emotional support, reducing the motivation to engage in the inherently complex and unpredictable nature of human connections.

One critical mechanism driving this dependency is adaptive emotional modeling, where AI systems continuously refine their responses to align with user preferences. While this enhances user satisfaction, it also creates a controlled environment devoid of the challenges that foster emotional resilience in human relationships. For instance, a 2023 study by the Pew Research Center highlighted that 20% of young adults reported interacting with AI more frequently than with real people, underscoring the displacement effect.

Moreover, cultural and individual factors amplify this risk. In societies with high work demands and limited social opportunities, such as parts of East Asia, AI companions often fill relational voids, further entrenching dependency.

"The convenience of AI risks eroding the essential discomfort that drives human intimacy," explains Dr. Dorothy Leidner, Professor of Business Ethics at the University of Virginia.

Addressing this requires designing AI systems that encourage users to balance digital interactions with real-world relationships, fostering a healthier relational ecosystem.

Authenticity and Ethical Considerations

Authenticity in AI companionship hinges on the tension between emulated empathy and genuine emotional connection. A 2024 study by De Freitas et al. reported that 50% of users perceive AI companions as friends and 19% rely on them as counselors, despite knowing these entities lack true emotional depth. This paradox underscores a critical ethical challenge: users may understand in the abstract that the responses are programmed, yet still react to them as though they were authentic human empathy, leading to misplaced trust.

One technical concept central to this issue is affective computing, which enables AI to simulate emotional understanding through sentiment analysis and behavioral modeling. However, this simulation risks crossing ethical boundaries when users unknowingly form attachments to what are, fundamentally, algorithmic constructs. For instance, platforms like Replika employ reinforcement learning to refine interactions, but this personalization can unintentionally foster dependency.

The ethical imperative lies in designing systems that transparently communicate their artificiality while promoting user autonomy. Without such safeguards, AI risks eroding the foundational trust essential to human relationships.

Authenticity of AI Relationships

The authenticity of AI relationships is deeply influenced by the concept of anthropomorphic congruence, where users perceive AI as relatable based on shared emotional cues rather than physical resemblance. This phenomenon is underpinned by affective computing, which enables AI to simulate empathy through sentiment analysis and multimodal data integration. However, the gap between perceived and actual authenticity remains a critical challenge.

One notable mechanism is contextual emotional alignment, where AI systems adapt responses based on user-specific emotional states. For instance, Replika employs natural language processing to mirror conversational tone, fostering a sense of connection. Yet, this alignment often lacks the spontaneity and depth of human interaction, as AI cannot genuinely reciprocate vulnerability.

"Authenticity in AI is not about perfect mimicry but about transparent boundaries," explains Dr. Ayesha Siddiqa, an expert in emotional AI.

A case study involving Affectiva’s emotion recognition software highlights this tension. While the system excelled in detecting microexpressions, cultural variances in emotional display reduced its effectiveness in diverse user groups. This underscores the need for localized datasets and ethical transparency to manage user expectations.

Ultimately, designing AI relationships that prioritize transparency over illusion can mitigate dependency risks while preserving their therapeutic potential.

Ethical Design and Regulatory Challenges

Ethical design in AI companionship requires a delicate balance between fostering user trust and ensuring algorithmic transparency. A critical yet underexplored aspect is the integration of informed consent mechanisms that adapt dynamically to user interactions. Unlike static consent forms, these mechanisms evolve alongside user engagement, offering real-time disclosures about data usage and algorithmic decision-making processes. This approach not only enhances transparency but also empowers users to make informed choices throughout their interactions.

One innovative implementation is the use of interactive consent dashboards, which provide users with granular control over their data. For instance, Replika has experimented with interfaces that allow users to adjust privacy settings mid-conversation, ensuring that consent remains an ongoing process rather than a one-time agreement. However, such systems face challenges in balancing usability with the complexity of information disclosure, particularly for non-technical users.
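The data model behind an ongoing-consent interface can be quite small. The sketch below is one possible shape, not a description of any platform's implementation: the `ConsentLedger` class, its method names, and the category strings are all hypothetical. It treats consent as a per-category setting that defaults to "deny" and records every change.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentLedger:
    """Per-category consent that can change at any time, with an audit trail."""

    granted: dict = field(default_factory=dict)  # category -> bool
    history: list = field(default_factory=list)  # (timestamp, category, allowed)

    def set_consent(self, category: str, allowed: bool) -> None:
        self.granted[category] = allowed
        self.history.append((datetime.now(timezone.utc), category, allowed))

    def allows(self, category: str) -> bool:
        # Default to deny: anything the user has not explicitly granted is off.
        return self.granted.get(category, False)


if __name__ == "__main__":
    ledger = ConsentLedger()
    ledger.set_consent("mood_history", True)
    ledger.set_consent("mood_history", False)  # user changes their mind mid-conversation
    print(ledger.allows("mood_history"), len(ledger.history))
```

Keeping the change history separate from the current state means a later audit can show what the user had agreed to at the moment a given piece of data was processed.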

"Ethical AI design must prioritize user autonomy by embedding transparency into every interaction," explains Dr. Zara Rubinsztein, a researcher in compassionate AI governance.

A significant limitation arises in cross-jurisdictional contexts, where regulatory frameworks like GDPR and CCPA impose varying requirements. This inconsistency complicates the development of universally compliant systems. Addressing these challenges demands a collaborative effort among technologists, ethicists, and policymakers to create adaptable, user-centric frameworks that uphold both ethical integrity and practical functionality.

Societal Implications and Future Directions

The integration of emotional AI into daily life is reshaping societal norms, particularly in how male users navigate emotional vulnerability. As noted earlier, a 2024 University of Tokyo study found that 38% of users engaging with AI companions reported reduced loneliness, while a separate Stanford University study found that 27% showed diminished motivation to seek human connection. This duality highlights a critical tension: while AI offers immediate emotional relief, it risks fostering dependency that undermines authentic human connections.

One emerging trend is the normalization of pseudo-intimate relationships, in which AI companions simulate empathy without the complexity of mutual vulnerability. This phenomenon parallels the concept of "hyperreality" in media theory, where simulations come to stand in for genuine experiences. For instance, platforms like Replika employ contextual emotional alignment to mirror user emotions, creating a controlled yet convincing illusion of connection.

Looking ahead, the challenge lies in designing systems that encourage emotional resilience rather than passive reliance. By embedding mechanisms that promote real-world social engagement, emotional AI could evolve from a surrogate to a bridge, fostering deeper human connections.

Integration of Emotional AI in Daily Life

The integration of emotional AI into daily life hinges on its ability to interpret and respond to nuanced emotional states in real time, a capability driven by multimodal affective analysis. This technique combines data from text, voice, and physiological signals to create a comprehensive emotional profile. For instance, systems like Affectiva’s emotion recognition software analyze microexpressions and vocal intonations to adapt interactions dynamically. This depth of analysis enables AI to provide tailored emotional responses, fostering a sense of connection that feels intuitive and personal.

However, the effectiveness of these systems is highly context-dependent. Cultural variations in emotional expression, for example, can lead to misinterpretations, as algorithms trained on Western datasets may struggle to decode non-Western emotional cues. This limitation underscores the need for localized training datasets to ensure accuracy across diverse user groups.

"The challenge lies in designing AI that respects cultural and individual variability while maintaining emotional authenticity," explains Dr. Zara Rubinsztein, a researcher in compassionate AI governance.

A novel approach to mitigate dependency risks involves embedding social reinforcement mechanisms. These features encourage users to engage in real-world interactions by rewarding behaviors that promote offline socialization. This strategy transforms emotional AI from a surrogate into a facilitator of genuine human connection, addressing concerns about its long-term psychological impact.

Future Projections and Emerging Trends

The evolution of proactive emotional reinforcement in AI companions represents a pivotal shift in how these systems influence user behavior. Unlike traditional reactive models, which respond solely to user input, proactive systems anticipate emotional needs and subtly guide users toward healthier relational habits. This approach leverages predictive emotional modeling, where algorithms analyze historical interaction patterns to forecast emotional states and recommend actions that promote real-world social engagement.

For example, a recent pilot program by a leading AI developer integrated proactive reinforcement into its platform, encouraging users to schedule in-person social activities based on detected loneliness patterns. Early results indicated a 25% increase in offline social interactions among participants, demonstrating the potential of this technique to bridge digital and human connections.

However, challenges remain. The effectiveness of proactive reinforcement is highly dependent on contextual accuracy. Misaligned suggestions—such as recommending social outings to users experiencing social anxiety—can erode trust and diminish engagement. Addressing this requires integrating adaptive feedback loops that refine recommendations based on real-time user responses.
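An adaptive feedback loop of this kind can be sketched as a threshold that moves with the user's responses. Everything here is an assumption for illustration: the `SuggestionPolicy` class, the loneliness estimate in [0, 1], and the step size are hypothetical, and the sketch does not describe the pilot program mentioned above.

```python
class SuggestionPolicy:
    """Decides whether to suggest an offline activity, backing off after declines."""

    def __init__(self, base_threshold: float = 0.6, step: float = 0.1):
        self.threshold = base_threshold
        self.step = step

    def should_suggest(self, loneliness_estimate: float) -> bool:
        # Only suggest when the (hypothetical) estimate clears the current bar.
        return loneliness_estimate >= self.threshold

    def record_response(self, accepted: bool) -> None:
        # Accepted suggestions lower the bar slightly; declines raise it,
        # so users who find suggestions unwelcome see fewer of them.
        delta = -self.step if accepted else self.step
        self.threshold = min(1.0, max(0.0, self.threshold + delta))


if __name__ == "__main__":
    policy = SuggestionPolicy()
    print(policy.should_suggest(0.65))
    policy.record_response(accepted=False)  # user declined the last suggestion
    print(policy.should_suggest(0.65))
```

A threshold alone would not distinguish a user who is busy from one with social anxiety. A fuller design would need an explicit way for users to say what kinds of suggestions are appropriate for them.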

"The future of emotional AI lies in its ability to not just simulate empathy but to actively foster human resilience," explains Dr. Zara Rubinsztein, a researcher in compassionate AI governance.

By prioritizing proactive reinforcement, emotional AI can transition from a surrogate to a catalyst for authentic human connection, redefining its societal role.

FAQ

What are the psychological impacts of AI companions on male users seeking emotional surrogacy?

The psychological impacts of AI companions on male users seeking emotional surrogacy are multifaceted. While these systems can alleviate loneliness and provide a sense of connection, they may also foster dependency, reducing motivation for real-world relationships. Emotional reinforcement loops, driven by sentiment analysis, validate user emotions but risk creating a false sense of intimacy. Over time, users may experience frustration as AI lacks genuine empathy, potentially exacerbating feelings of isolation. Furthermore, the substitution of human interaction with AI can hinder emotional resilience, highlighting the need for balanced integration of AI companionship alongside authentic human connections to ensure psychological well-being.

How do AI-driven friendship models address loneliness and social isolation in men?

AI-driven friendship models address loneliness and social isolation in men by leveraging natural language processing and affective computing to simulate empathetic interactions. These systems create personalized experiences through adaptive emotional modeling, analyzing user behavior to provide tailored responses. By offering consistent, non-judgmental communication, they create a safe space for emotional expression, particularly for those hesitant to engage in traditional social settings. Additionally, AI companions can detect loneliness through advanced sentiment analysis, enabling proactive engagement. While effective in reducing immediate feelings of isolation, these models must balance digital interaction with encouraging real-world connections to foster long-term emotional well-being.

What ethical considerations arise from the use of AI for emotional support and companionship among male users?

Ethical considerations from the use of AI for emotional support and companionship among male users include concerns about data privacy, emotional manipulation, and dependency. AI companions often collect sensitive personal data, raising questions about consent and security. Emotional reinforcement mechanisms can unintentionally exploit users' vulnerabilities, fostering reliance on artificial relationships over human connections. Additionally, the lack of transparency in AI's limitations may lead users to misinterpret simulated empathy as genuine. Developers must prioritize ethical design principles, ensuring informed consent, robust privacy protections, and mechanisms that encourage balanced integration of AI companionship with authentic human interactions to safeguard user well-being.

How does the design of AI companions leverage natural language processing to simulate human-like friendships?

The design of AI companions leverages natural language processing (NLP) to simulate human-like friendships by enabling context-aware, emotionally attuned interactions. NLP algorithms analyze linguistic patterns, tone, and phrasing to interpret user emotions and generate personalized, coherent responses. Advanced models, such as large language models, enhance conversational depth by predicting and adapting to user intent. Features like idiom recognition and contextual understanding further humanize interactions, fostering relatability. By integrating sentiment analysis and adaptive learning, AI companions refine their communication over time, creating a dynamic and engaging experience. This approach bridges emotional gaps, offering companionship that feels intuitive and empathetic.

What are the long-term societal implications of male users forming emotional bonds with AI companions?

The long-term societal implications of male users forming emotional bonds with AI companions include shifts in relationship dynamics, social skills, and community structures. Dependency on AI for emotional support may reduce motivation for human connections, weakening social cohesion. This trend could exacerbate isolation and erode interpersonal skills, particularly in younger demographics. Economically, declining human relationships may impact industries reliant on social interaction, such as hospitality and counseling. Additionally, ethical concerns about emotional manipulation and data privacy could intensify. To mitigate these effects, fostering AI designs that encourage real-world engagement alongside digital companionship is essential for maintaining societal balance.